This week it seemed like a good idea to take a look at some of the most highly cited AI papers. Back in week 81 of this journey into writing on Substack, I took a look at some of the most highly cited ML papers [1]. I was expecting a lot more overlap, but was pleasantly surprised by the differences. One paper really stood out based on its total number of citations, and it’s up first. Intellectually I can accept that a paper has more than 100,000 citations, but in practice that is an awful lot of references for an academic paper to have. It represents a degree of asynchronous interaction between researchers that helps bring the intellectual space called the academy to life.

Papers with over 100,000 citations:

Kingma, D. P., & Ba, J. (2014). Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980. https://arxiv.org/pdf/1412.6980.pdf

Papers with over 50,000 citations:

Ren, S., He, K., Girshick, R., & Sun, J. (2015). Faster R-CNN: Towards real-time object detection with region proposal networks. Advances in neural information processing systems, 28. https://proceedings.neurips.cc/paper/2015/file/14bfa6bb14875e45bba028a21ed38046-Paper.pdf

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., ... & Polosukhin, I. (2017). Attention is all you need. Advances in neural information processing systems, 30. https://proceedings.neurips.cc/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf

Papers with over 20,000 citations:

Mnih, V., Kavukcuoglu, K., Silver, D., Rusu, A. A., Veness, J., Bellemare, M. G., ... & Hassabis, D. (2015). Human-level control through deep reinforcement learning. Nature, 518(7540), 529-533. https://daiwk.github.io/assets/dqn.pdf

Ioffe, S., & Szegedy, C. (2015, June). Batch normalization: Accelerating deep network training by reducing internal covariate shift. In International conference on machine learning (pp. 448-456). PMLR. http://proceedings.mlr.press/v37/ioffe15.pdf

Papers with over 10,000 citations:

Silver, D., Huang, A., Maddison, C. J., Guez, A., Sifre, L., Van Den Driessche, G., ... & Hassabis, D. (2016). Mastering the game of Go with deep neural networks and tree search. Nature, 529(7587), 484-489.

Arjovsky, M., Chintala, S., & Bottou, L. (2017, July). Wasserstein generative adversarial networks. In International conference on machine learning (pp. 214-223). PMLR. http://proceedings.mlr.press/v70/arjovsky17a/arjovsky17a.pdf

Kipf, T. N., & Welling, M. (2016). Semi-supervised classification with graph convolutional networks. arXiv preprint arXiv:1609.02907. https://arxiv.org/pdf/1609.02907.pdf

Without question, these levels of citation indicate that the works are being read and actively referenced within the scholarly community. I’m sharing the citation numbers here to give you a sense of scale for the AI community and how many people are researching the things happening in this space. This is a very crowded and vibrant corner of the academy where a lot of time and effort is going into building very real and deployable technology. Given the sheer volume of people working on these problems, it’s only a matter of time before somebody shouts “Eureka!” and we see practical deployments in production that influence our daily lives.

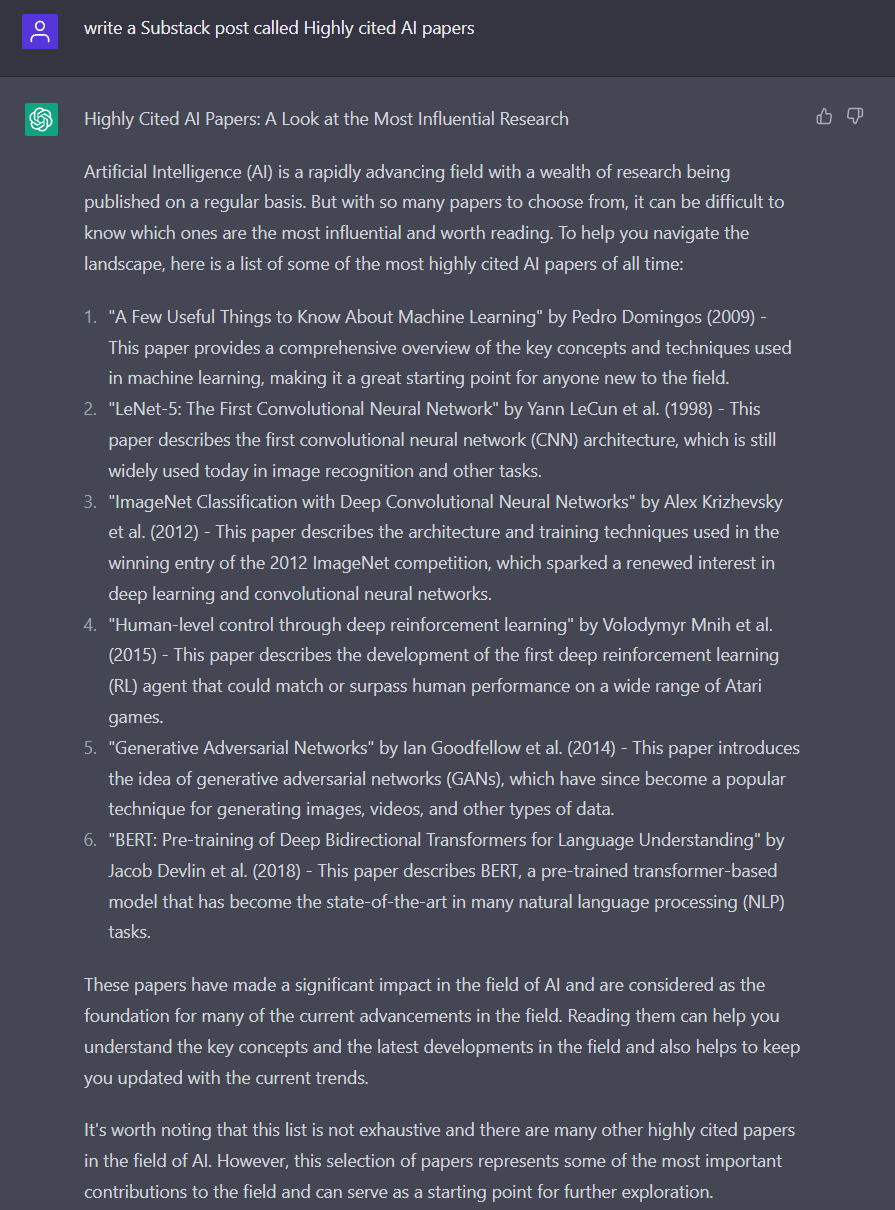

What would ChatGPT create?

If you were wondering what ChatGPT from OpenAI would have generated with the same prompt, then you are in luck. I generated that output over at https://chat.openai.com/chat.

Links and thoughts:

Top 5 Tweets of the week:

Footnotes:

[1] Week 81 of The Lindahl Letter:

What’s next for The Lindahl Letter?

Week 108: Twitter as a company probably would not happen today

Week 109: Robots in the house

Week 110: Understanding knowledge graphs

Week 111: Natural language processing

Week 112: Autonomous vehicles

If you enjoyed this content, then please take a moment and share it with a friend. If you are new to The Lindahl Letter, then please consider subscribing. New editions arrive every Friday. Thank you and enjoy the year ahead.

Lindahl, N. (2023). The Lindahl letter: 104 Machine Learning Posts. Lulu Press, Inc. https://www.lulu.com/shop/nels-lindahl/the-lindahl-letter-104-machine-learning-posts/ebook/product-y244ep.html

Highly cited AI papers